The following project was my undergraduate research thesis, completed for graduation with honors research distinction. You can find the published paper at: Knowledge Bank, Published 05/25. The project has since evolved into my graduate research project, and updates to the material are still being incorporated.

This research project explores how quantized communication affects data-driven control of unmanned aerial vehicles (UAVs), focusing specifically on quadcopters. By integrating dither quantization into a Koopman operator-based Model Predictive Control (MPC) framework, the project investigates how shortening the word length of transmitted data conserves bandwidth and how that reduction impacts system identification and flight performance. Using a multi-stage validation process that includes MATLAB simulations, Gazebo-based digital twin testing, and real-world experiments with the PX4 Starling Developer Kit, this work aims to bridge the gap between theoretical control models and their deployment on resource-constrained aerial platforms. The findings offer practical insights for designing reliable, low-bandwidth control systems for next-generation UAVs.

Background

Modern quadcopters are becoming increasingly reliant on data-driven control strategies to maintain stability and performance in complex environments. However, these systems are often deployed on embedded platforms with limited computational power, memory, and bandwidth, which poses challenges for real-time communication and control. One common method to address these constraints is quantization, the process of converting high-resolution continuous data into lower-bit discrete representations. While this technique reduces data transmission costs, it introduces quantization error, which can distort system identification and degrade control performance.

To mitigate this, dither quantization adds random noise before quantizing and removes it afterward, which decorrelates the error from the signal and reduces bias. In this project, dither quantization is applied to both state and control data before they are used to train and deploy a Koopman-based MPC. The Koopman operator provides a linear surrogate model of the inherently nonlinear quadrotor dynamics by lifting the state into a higher-dimensional space of observables, where the lifted dynamics are fit from data with Extended Dynamic Mode Decomposition (EDMD). Because the surrogate is linear, the resulting MPC problem stays light enough to solve in real time. The open question is how well the learned model holds up when its data are coarsely quantized.

By systematically analyzing how quantization resolution impacts model accuracy and tracking performance, this work contributes to a growing body of research focused on deploying advanced control algorithms in low-resource environments.

Overview

Below are the key technical foundations that this research builds upon.

What is Quantization?

Quantization is the process of mapping a large set of input values to a smaller set of output values. This is critical in embedded systems where memory, processing power, or bandwidth are limited.

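A uniform quantizer with resolution $\Delta$ maps a real number $x$ in the range $[x_{\min}, x_{\max}]$ to the nearest grid level:

$$Q(x) = x_{\min} + \Delta \cdot \operatorname{round}\!\left(\frac{x - x_{\min}}{\Delta}\right)$$

The resolution is based on the bit-depth (word-length) $b$ and the signal range:

$$\Delta = \frac{x_{\max} - x_{\min}}{2^{b} - 1}$$

In dither quantization, noise $w$ is added before quantizing and subtracted after:

$$\tilde{x} = Q(x + w) - w, \qquad w \sim \mathcal{U}\!\left(-\tfrac{\Delta}{2}, \tfrac{\Delta}{2}\right)$$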

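As long as the dithered value stays within the quantizer range, this technique makes the quantization error independent of the signal. It therefore removes the signal-dependent bias that would otherwise corrupt learning and control.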
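A uniform quantizer and its subtractive-dither variant can be sketched in a few lines of NumPy. This is a minimal illustration, not the project's code. The function names are hypothetical. The sketch assumes a grid of $2^b$ levels spanning $[x_{\min}, x_{\max}]$ and dither drawn uniformly over one quantization step. The demo quantizes a constant signal that sits between two grid levels. Plain quantization leaves a fixed bias there, while the dithered errors average out.

```python
import numpy as np

def uniform_quantize(x, x_min, x_max, bits):
    """Round x to the nearest of the 2**bits levels x_min + k*delta, k = 0..2**bits - 1."""
    delta = (x_max - x_min) / (2 ** bits - 1)
    k = np.clip(np.round((x - x_min) / delta), 0, 2 ** bits - 1)  # saturate at the range ends
    return x_min + k * delta

def dither_quantize(x, x_min, x_max, bits, rng):
    """Subtractive dither: add w ~ U(-delta/2, delta/2), quantize, then subtract the same w."""
    delta = (x_max - x_min) / (2 ** bits - 1)
    w = rng.uniform(-delta / 2, delta / 2, size=np.shape(x))
    return uniform_quantize(x + w, x_min, x_max, bits) - w

rng = np.random.default_rng(0)
x = np.full(100_000, 0.3)          # constant signal lying between two grid levels
bits, x_min, x_max = 4, -1.0, 1.0  # 4-bit grid on [-1, 1], so delta = 2/15

plain = uniform_quantize(x, x_min, x_max, bits)
dithered = dither_quantize(x, x_min, x_max, bits, rng)

print("plain bias:   ", np.mean(plain - x))     # fixed offset of 1/30; averaging cannot remove it
print("dithered bias:", np.mean(dithered - x))  # close to zero
```

Averaging helps only because the dither makes each sample's error independent of the signal. Without dither, every sample of the constant lands on the same level.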

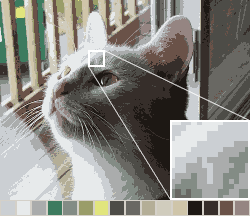

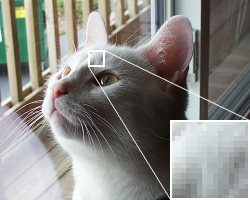

Image processing offers an intuitive example of quantization. The left image shows the original image of a cat in full 24-bit RGB color. The middle image shows a version quantized down to 16 colors, and the right image shows a dither-quantized version of the same image.

Quantization degrades the RGB signals, but the cat and the background remain easy to recognize. With dithering, the error is decorrelated from the true signal: colors appear smoother and banding is visibly reduced.

This example motivates applying dither quantization to the state and control data used in drone control.

Koopman Operator Theory

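The Koopman operator lets us represent nonlinear systems as linear ones in a lifted feature space. For a discrete-time system

$$x_{k+1} = f(x_k),$$

we define observables $\psi(x)$ and apply the Koopman operator $\mathcal{K}$ as:

$$\mathcal{K}\psi = \psi \circ f, \qquad \text{so that} \qquad (\mathcal{K}\psi)(x_k) = \psi(x_{k+1}).$$

This transforms nonlinear dynamics into linear evolution in a higher-dimensional space.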
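A standard toy example (not the quadrotor model) makes the lifting concrete. The map below has only one nonlinearity, $x_1^2$. Adding $x_1^2$ as an observable therefore gives an exactly linear, three-dimensional Koopman representation. The coefficients are arbitrary assumptions. For the quadrotor, no such exact finite closure is expected, which is why the Koopman model has to be approximated from data.

```python
import numpy as np

a, b, c = 0.9, 0.5, 0.2  # illustrative coefficients (assumed)

def f(x):
    """Nonlinear map: x1 decays linearly, x2 is driven by x1 squared."""
    return np.array([a * x[0], b * x[1] + c * x[0] ** 2])

def psi(x):
    """Observables: the state plus the single nonlinear term appearing in f."""
    return np.array([x[0], x[1], x[0] ** 2])

# Koopman matrix on span{x1, x2, x1^2}: K @ psi(x) equals psi(f(x)) exactly.
K = np.array([[a, 0.0, 0.0],
              [0.0, b, c],
              [0.0, 0.0, a ** 2]])

x = np.array([1.5, -0.7])
z = psi(x)
for _ in range(20):
    x = f(x)     # propagate the nonlinear system
    z = K @ z    # propagate the lifted linear system
print(np.allclose(psi(x), z))  # True: in the lifted space the dynamics are linear
```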

Model Predictive Control (MPC)

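Model Predictive Control (MPC) computes optimal control actions by solving a constrained optimization problem over a future horizon of $N$ steps. Using the lifted linear model, the controller solves:

$$\min_{u_0,\dots,u_{N-1}} \; \sum_{k=0}^{N-1} \left( \lVert C z_{k+1} - r_{k+1} \rVert_Q^2 + \lVert u_k \rVert_R^2 \right)$$

subject to:

$$z_{k+1} = A z_k + B u_k, \qquad z_0 = \psi(x_0), \qquad u_{\min} \le u_k \le u_{\max},$$

where $r_k$ is the position reference, $C$ maps the lifted state to position, and $Q$ and $R$ weight tracking error and input effort. Only the first input $u_0$ is applied before the problem is re-solved at the next sample. This formulation allows real-time optimization of control inputs under constraints.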
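When the input constraints are inactive, the MPC problem reduces to a linear least-squares problem in the stacked input sequence. The sketch below builds the standard condensed prediction matrices and solves that problem with NumPy. Scalar weights `q` and `r` stand in for the tracking and input penalties. The double-integrator model, time step and weights are assumptions chosen for illustration. The function names are hypothetical, and `rollout_cost` re-evaluates the same cost by direct simulation as a cross-check.

```python
import numpy as np

def condensed_mpc(A, B, C, z0, ref, q=1.0, r=0.01):
    """Unconstrained MPC over horizon N = len(ref):
    minimize sum_{k=1..N} q*||C z_k - ref_k||^2 + sum_{k=0..N-1} r*||u_k||^2
    subject to z_{k+1} = A z_k + B u_k. Returns the optimal inputs, shape (N, m)."""
    N = ref.shape[0]
    n, m = B.shape
    # Prediction matrices: [z_1; ...; z_N] = Phi z0 + Gamma [u_0; ...; u_{N-1}]
    Phi = np.vstack([np.linalg.matrix_power(A, k) for k in range(1, N + 1)])
    Gamma = np.zeros((N * n, N * m))
    for k in range(1, N + 1):      # block row for z_k
        for j in range(k):         # contribution of u_j to z_k
            Gamma[(k - 1) * n:k * n, j * m:(j + 1) * m] = np.linalg.matrix_power(A, k - 1 - j) @ B
    Cbar = np.kron(np.eye(N), C)   # apply the output map at every step
    # Stack the weighted tracking and input terms into one least-squares problem.
    lhs = np.vstack([np.sqrt(q) * Cbar @ Gamma, np.sqrt(r) * np.eye(N * m)])
    rhs = np.concatenate([np.sqrt(q) * (ref.ravel() - Cbar @ Phi @ z0), np.zeros(N * m)])
    U, *_ = np.linalg.lstsq(lhs, rhs, rcond=None)
    return U.reshape(N, m)

def rollout_cost(A, B, C, z0, ref, U, q=1.0, r=0.01):
    """Evaluate the same cost by simulating the model step by step."""
    z, cost = z0, 0.0
    for k in range(len(U)):
        cost += r * U[k] @ U[k]
        z = A @ z + B @ U[k]
        e = C @ z - ref[k]
        cost += q * e @ e
    return cost

# Usage on a double integrator (a stand-in for a lifted model; dt is assumed).
dt = 0.1
A = np.array([[1.0, dt], [0.0, 1.0]])
B = np.array([[0.5 * dt ** 2], [dt]])
C = np.array([[1.0, 0.0]])
z0 = np.zeros(2)
ref = np.ones((10, 1))               # step to position 1 over a 10-step horizon
U = condensed_mpc(A, B, C, z0, ref)
u_apply = U[0]                       # receding horizon: apply only the first input
```

If every entry of `U` lies inside the input bounds, the box constraints are inactive and this unconstrained solution is also the constrained optimum. Otherwise, the constraints must be enforced with a quadratic-programming solver.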

Extended Dynamic Mode Decomposition (EDMD)

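To build a Koopman model from data, we use Extended Dynamic Mode Decomposition with Control (EDMDc). We are given $M$ snapshot triples $(x_j, u_j, x_j^{+})$, where $x_j^{+}$ is the state one sample after $x_j$. The lifted data are stacked as $Z = [\psi(x_1), \dots, \psi(x_M)]$, $Z^{+} = [\psi(x_1^{+}), \dots, \psi(x_M^{+})]$ and $U = [u_1, \dots, u_M]$, and we solve:

$$\min_{A,\,B} \; \lVert Z^{+} - A Z - B U \rVert_F^2$$

Matrices $A$ and $B$ are learned from data and represent a linear model $z_{k+1} = A z_k + B u_k$ in the lifted space defined by $\psi$.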
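Stacking the lifted snapshots column-wise turns EDMDc into an ordinary linear least-squares problem. Its solution is the lifted successor data multiplied by the Moore-Penrose pseudoinverse of the stacked lifted states and inputs. The minimal NumPy sketch below follows that recipe. The function name and toy system are hypothetical. The toy system is chosen to have an exact linear lift, so the correct $A$ and $B$ are known in advance.

```python
import numpy as np

def edmdc_fit(X, Xn, U, psi):
    """Least-squares EDMDc: A, B minimizing ||Psi(X') - A Psi(X) - B U||_F.
    X, Xn: (M, n) states and successor states; U: (M, m) inputs;
    psi: maps one state to a 1-D array of lifted observables."""
    Z = np.array([psi(x) for x in X]).T           # (N_lift, M)
    Zn = np.array([psi(x) for x in Xn]).T         # (N_lift, M)
    G = np.vstack([Z, U.T])                       # regressor [Z; U]
    AB = Zn @ np.linalg.pinv(G)                   # [A B] = Z' [Z; U]^+
    n_lift = Z.shape[0]
    return AB[:, :n_lift], AB[:, n_lift:]

# Toy system with an exact linear lift (coefficients assumed for illustration).
a, b, c = 0.9, 0.5, 0.2

def step(x, u):
    return np.array([a * x[0], b * x[1] + c * x[0] ** 2 + u[0]])

def psi(x):
    return np.array([x[0], x[1], x[0] ** 2])

rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 2))
U = rng.normal(0.0, 0.5, size=(200, 1))
Xn = np.array([step(x, u) for x, u in zip(X, U)])
A_hat, B_hat = edmdc_fit(X, Xn, U, psi)
```

With noise-free data, `A_hat` recovers `[[a, 0, 0], [0, b, c], [0, 0, a**2]]` and `B_hat` recovers `[[0], [1], [0]]` to numerical precision.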

Methods

The workflow follows the SciTech 2026 manuscript setup: identify a Koopman linear predictor with EDMDc from quantized state/input data, then deploy that predictor inside linear MPC and evaluate prediction and tracking performance versus quantization word-length.

Data-Driven Identification Setup

The quadrotor dynamics are modeled on SE(3) and lifted with the EDMDc dictionary used in the paper, giving a lifted observable dimension of 51.

For system identification, the paper uses:

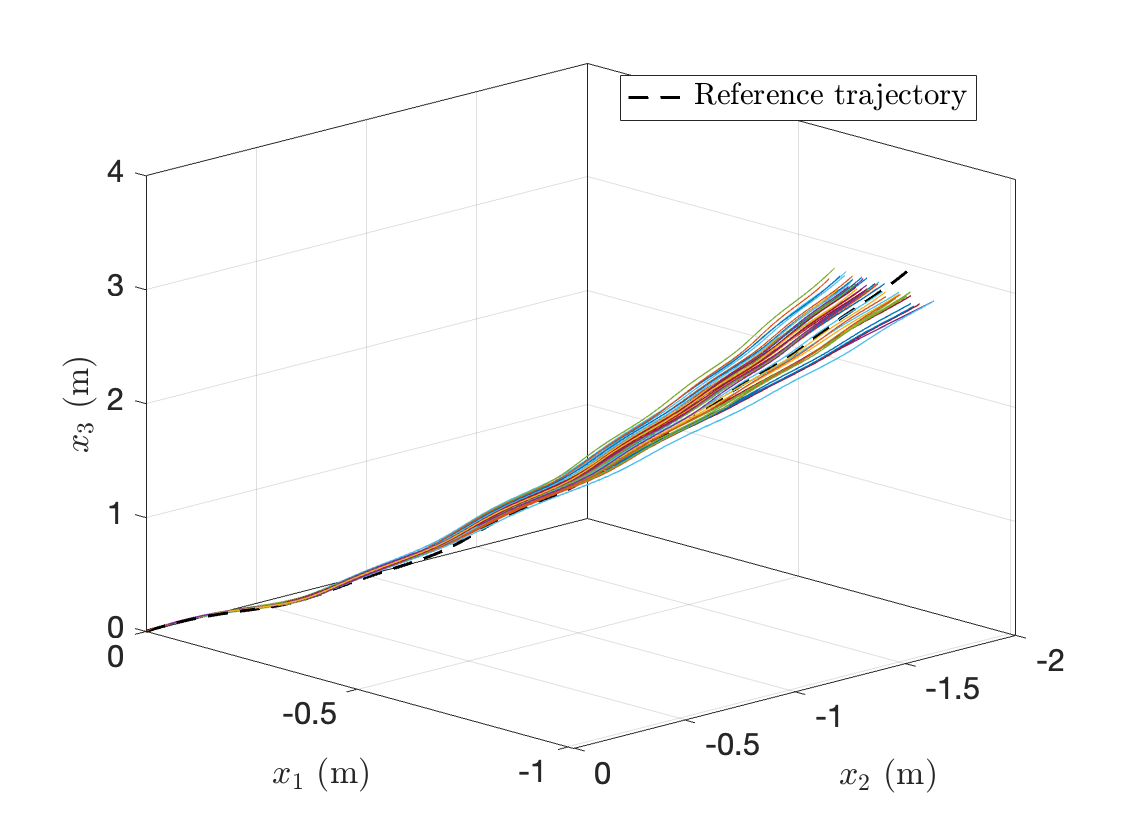

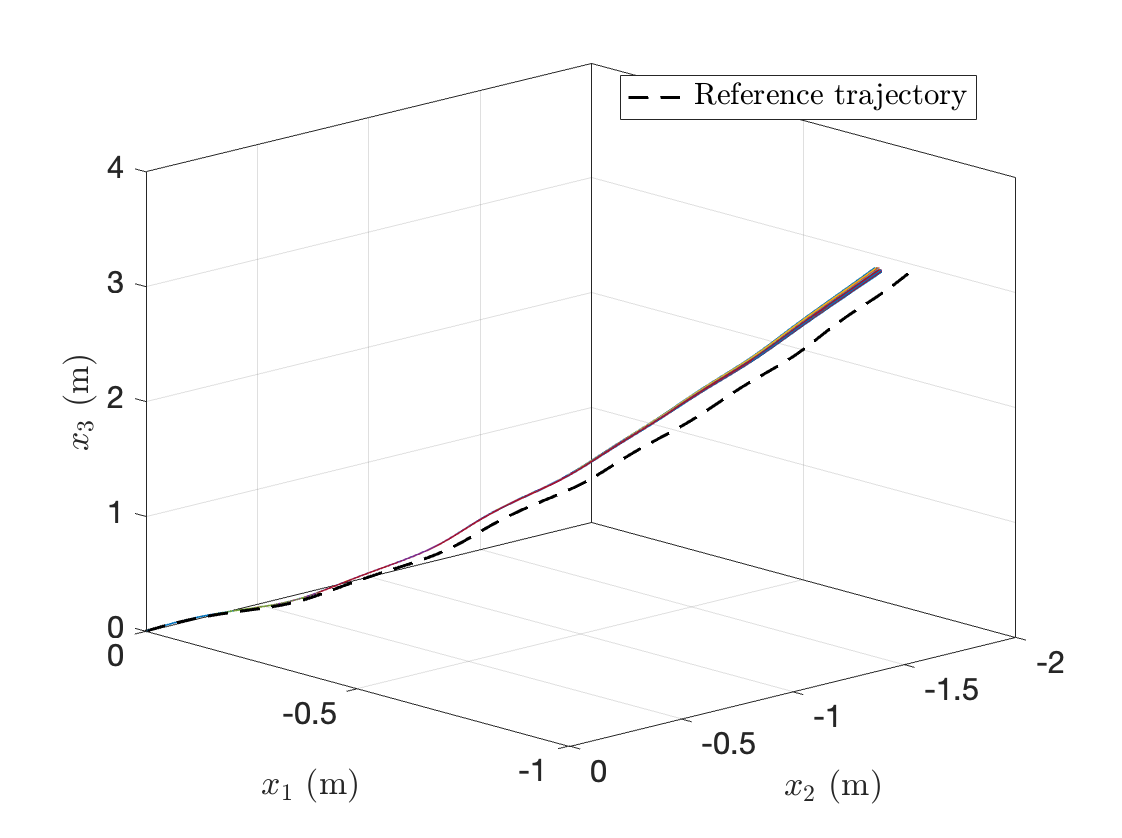

- 100 independent training trajectories

- Trajectory duration

- Sampling period

- Initial state fixed (identical for every trajectory)

- Control input excitation sampled as zero-mean Gaussian with diagonal covariance
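The data-collection step can be sketched as follows, assuming a generic one-step simulator `step(x, u)`. Everything here is hypothetical. The two-state `toy_step` stands in for the SE(3) quadrotor model, and the trajectory length and input standard deviation are placeholders rather than the paper's values. Only the structure mirrors the list above: a fixed initial state, zero-mean Gaussian excitation with diagonal covariance, and one-step snapshot pairs stacked across trajectories.

```python
import numpy as np

def collect_snapshots(step, x0, n_traj, n_steps, input_std, rng):
    """Roll out n_traj trajectories from the same initial state x0, exciting each with
    i.i.d. zero-mean Gaussian inputs of per-channel std input_std (diagonal covariance),
    and stack one-step snapshot pairs. Returns X, X_next (M, n) and U (M, m)."""
    X, X_next, U = [], [], []
    for _ in range(n_traj):
        x = np.asarray(x0, dtype=float)
        for _ in range(n_steps):
            u = rng.normal(0.0, input_std)   # independent channels -> diagonal covariance
            x_new = step(x, u)
            X.append(x)
            U.append(u)
            X_next.append(x_new)
            x = x_new
    return np.array(X), np.array(X_next), np.array(U)

def toy_step(x, u):
    """Hypothetical two-state system standing in for the quadrotor simulator."""
    return np.array([0.9 * x[0] + 0.1 * u[0], 0.5 * x[1] + 0.2 * x[0] ** 2])

rng = np.random.default_rng(0)
# 100 trajectories as in the paper; n_steps and input_std are placeholders.
X, X_next, U = collect_snapshots(toy_step, [0.0, 0.0], n_traj=100, n_steps=50,
                                 input_std=np.array([1.0]), rng=rng)
```

Snapshots are stacked within each trajectory, so `X[k + 1]` equals `X_next[k]` except at trajectory boundaries.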

Unquantized stacked snapshots are used once to compute the baseline (“ground truth”) Koopman predictor matrices.

Dither Quantization Campaign

To evaluate bandwidth-constrained learning, dither quantization is applied entry-wise to both state and control measurements before identification. Quantizer bounds are built from the min/max values of the unquantized training data and slightly inflated to avoid saturation under dither.
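One way to make "slightly inflated" precise is to pad each channel's range by exactly half a quantization step of the padded grid. That is the furthest a subtractive dither sample can push a value. This padding rule and the function name are assumptions, not the paper's stated choice. The sketch also assumes the grid convention $\Delta = (\text{range})/(2^b - 1)$. Solving $\text{pad} = \Delta/2$ with $\Delta = (R + 2\,\text{pad})/(2^b - 1)$ for a data range $R$ gives $\text{pad} = R/(2^{b+1} - 4)$.

```python
import numpy as np

def inflated_bounds(data, bits):
    """Per-channel quantizer bounds for entry-wise quantization.

    Starts from the min/max of the unquantized training data (shape (M, d)) and pads
    each side by pad = R / (2**(bits + 1) - 4), where R is the channel's data range.
    This (assumed) rule makes pad equal to half the step size on the padded range,
    so any sample plus dither w ~ U(-delta/2, delta/2) stays inside the bounds.
    Requires bits >= 2."""
    lo, hi = data.min(axis=0), data.max(axis=0)
    pad = (hi - lo) / (2 ** (bits + 1) - 4)
    return lo - pad, hi + pad

rng = np.random.default_rng(0)
data = rng.normal(size=(1000, 3)) * np.array([1.0, 5.0, 0.1])  # channels on different scales
lo_q, hi_q = inflated_bounds(data, bits=8)
delta = (hi_q - lo_q) / (2 ** 8 - 1)                         # per-channel step size
```

Because the bounds are computed per channel, a channel with a small range keeps a proportionally fine step instead of inheriting the step of the widest channel.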

For each tested word-length, the full identification/prediction/MPC pipeline is repeated across independent dither draws:

- Word-lengths: 4, 8, 12, 14, and 16 bits

- Dither realizations per word-length: 50
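The campaign itself is two nested loops, one over word-lengths and one over independent dither draws, and each pass repeats quantize, identify, score. The sketch below runs that loop end to end on a hypothetical two-state toy system with an exact three-observable lift, scoring only the relative error in the identified $A$. It is not the paper's pipeline. The system, sample count, input scale and padding rule are all assumptions. Only the word-lengths and the 50 draws per word-length come from the list above.

```python
import numpy as np

rng = np.random.default_rng(1)
a, b, c = 0.9, 0.5, 0.2        # toy coefficients (assumed)

def step(X, U):
    """Hypothetical toy system (vectorized over rows), standing in for the quadrotor."""
    return np.column_stack([a * X[:, 0], b * X[:, 1] + c * X[:, 0] ** 2 + U[:, 0]])

def lift(X):
    """Observables [x1, x2, x1^2], an exact linear lift for the toy system; shape (3, M)."""
    return np.vstack([X[:, 0], X[:, 1], X[:, 0] ** 2])

def edmdc(X, Xn, U):
    """Least-squares EDMDc fit: [A B] = lift(Xn) @ pinv([lift(X); U^T])."""
    Z = lift(X)
    AB = lift(Xn) @ np.linalg.pinv(np.vstack([Z, U.T]))
    return AB[:, :Z.shape[0]], AB[:, Z.shape[0]:]

def bounds(data, bits):
    """Per-channel min/max, padded by half a step so dithered values stay in range."""
    lo, hi = data.min(axis=0), data.max(axis=0)
    pad = (hi - lo) / (2 ** (bits + 1) - 4)
    return lo - pad, hi + pad

def dither_quantize(x, lo, hi, bits):
    """Entry-wise subtractive dither quantization on a per-channel uniform grid."""
    delta = (hi - lo) / (2 ** bits - 1)
    w = rng.uniform(-delta / 2, delta / 2, size=x.shape)
    k = np.clip(np.round((x + w - lo) / delta), 0, 2 ** bits - 1)
    return lo + k * delta - w

# Unquantized snapshots give the baseline ("ground truth") model.
M = 500
X = rng.uniform(-1.0, 1.0, size=(M, 2))
U = rng.normal(0.0, 0.5, size=(M, 1))
Xn = step(X, U)
A0, _ = edmdc(X, Xn, U)

word_lengths, n_draws = [4, 8, 12, 14, 16], 50
median_err = []
for bits in word_lengths:
    lo_x, hi_x = bounds(np.vstack([X, Xn]), bits)   # shared bounds for the state channels
    lo_u, hi_u = bounds(U, bits)
    errs = []
    for _ in range(n_draws):                        # independent dither realizations
        A, _ = edmdc(dither_quantize(X, lo_x, hi_x, bits),
                     dither_quantize(Xn, lo_x, hi_x, bits),
                     dither_quantize(U, lo_u, hi_u, bits))
        errs.append(np.linalg.norm(A - A0) / np.linalg.norm(A0))
    median_err.append(np.median(errs))
    print(f"{bits:2d} bits: median relative error in A = {median_err[-1]:.2e}")
```

On this toy problem the median error should fall steadily as word-length grows. The quadrotor model in the paper need not follow the same scaling.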

MPC and Evaluation Metrics

The learned predictor is embedded in linear MPC with:

- Prediction horizon: 10 steps

- Simulation window:

- Input penalty:

- Input constraints: each control channel is box-bounded (no saturation was observed in the reported runs)

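The paper reports four primary metrics, where $\hat{A}$ and $\hat{B}$ are identified from quantized data and $A$ and $B$ are the unquantized baseline:

- Relative Koopman matrix error in $A$: $\lVert \hat{A} - A \rVert / \lVert A \rVert$

- Relative Koopman matrix error in $B$: $\lVert \hat{B} - B \rVert / \lVert B \rVert$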

- One-step position prediction RMSE

- Closed-loop MPC position tracking RMSE
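The four metrics reduce to two small helpers plus one prediction step. The sketch assumes Frobenius norms for the matrix errors. It also assumes that position RMSE is the square root of the mean squared Euclidean position error over time. The paper's exact normalization may differ, and the function names are hypothetical.

```python
import numpy as np

def relative_error(M_hat, M_ref):
    """Relative matrix error ||M_hat - M_ref|| / ||M_ref|| (Frobenius norm assumed)."""
    return np.linalg.norm(M_hat - M_ref) / np.linalg.norm(M_ref)

def position_rmse(P, P_ref):
    """RMSE of 3-D position: sqrt(mean over samples of ||p_k - p_ref_k||^2); P, P_ref: (T, 3)."""
    return float(np.sqrt(np.mean(np.sum((P - P_ref) ** 2, axis=1))))

def one_step_rmse(A, B, C, Z, U, P_next):
    """One-step prediction RMSE of a lifted model.
    Z: (N_lift, T) lifted states, U: (m, T) inputs, P_next: (T, 3) measured next positions,
    C: (3, N_lift) reads position out of the lifted state (assumed layout)."""
    P_pred = (C @ (A @ Z + B @ U)).T
    return position_rmse(P_pred, P_next)

# Tiny check: one sample exact, one off by 1 m along x.
P = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
print(position_rmse(P, np.zeros((2, 3))))          # sqrt(0.5), about 0.707
print(relative_error(2 * np.eye(3), np.eye(3)))    # 1.0
```

Closed-loop tracking RMSE reuses `position_rmse`, applied to the simulated MPC trajectory and its reference.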

Results

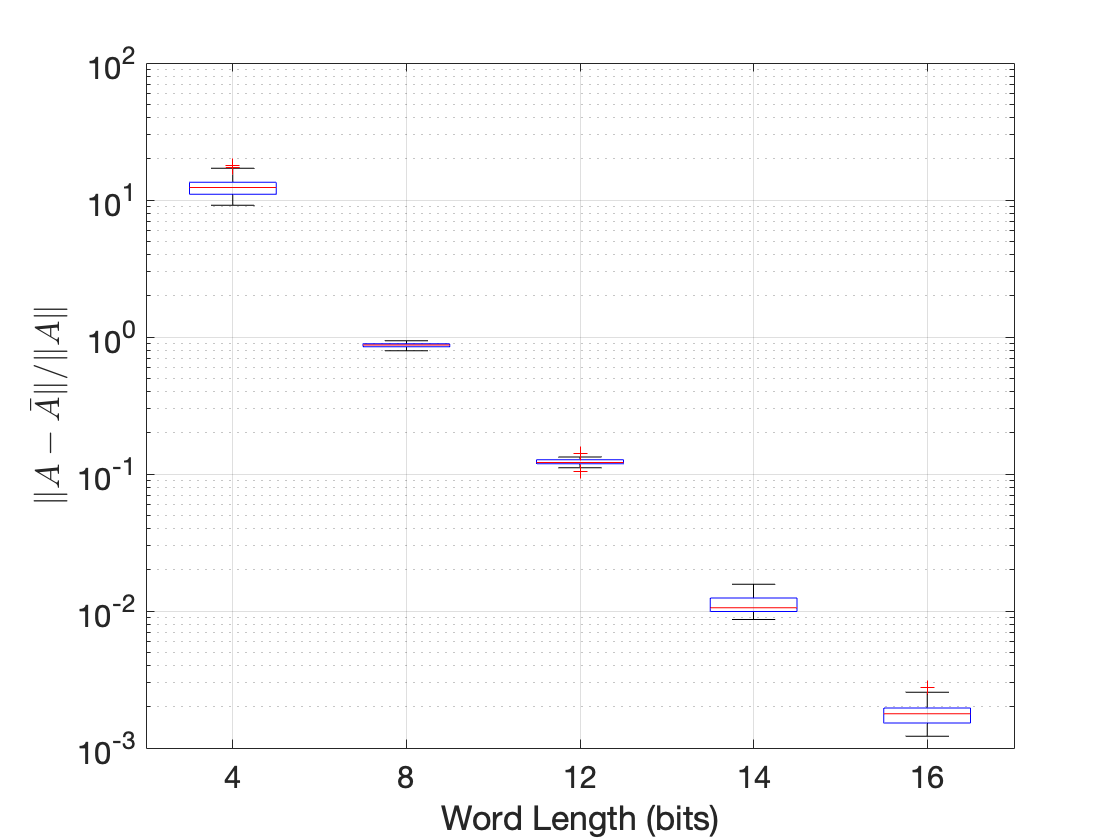

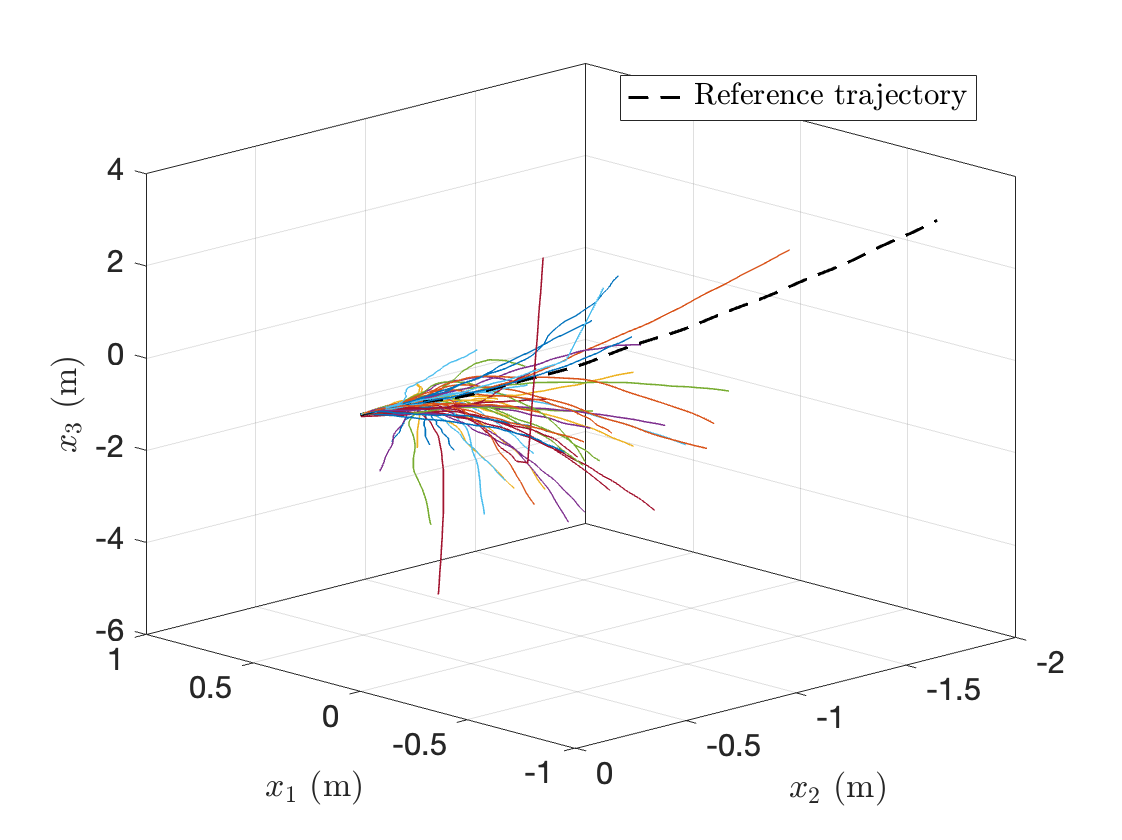

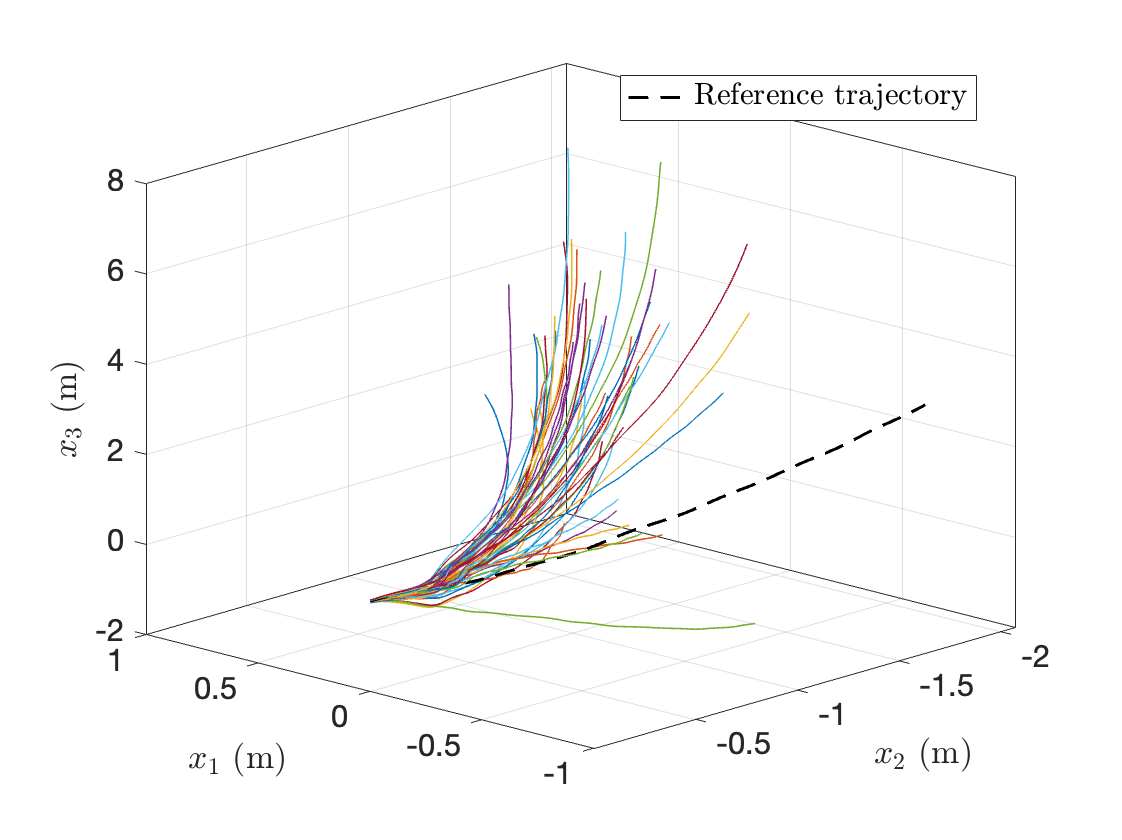

Across all experiments in the paper, shorter word-lengths increased identification, prediction, and tracking error, while longer word-lengths consistently recovered performance.

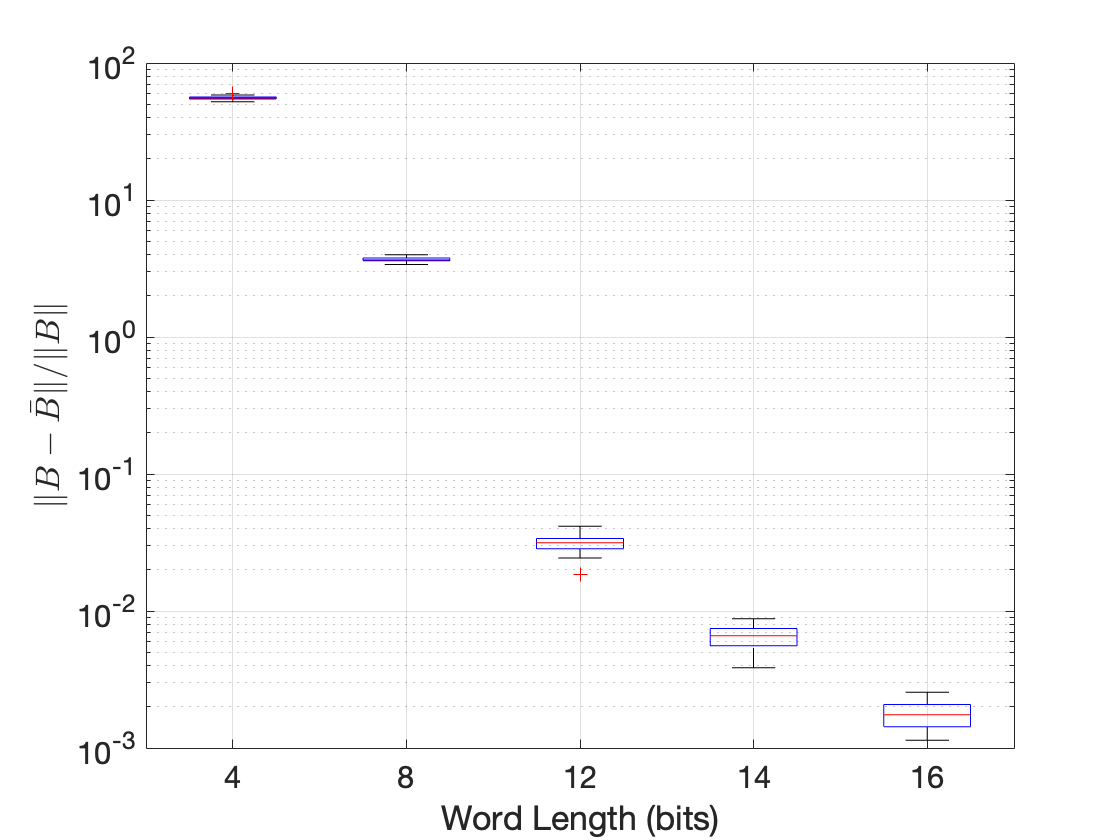

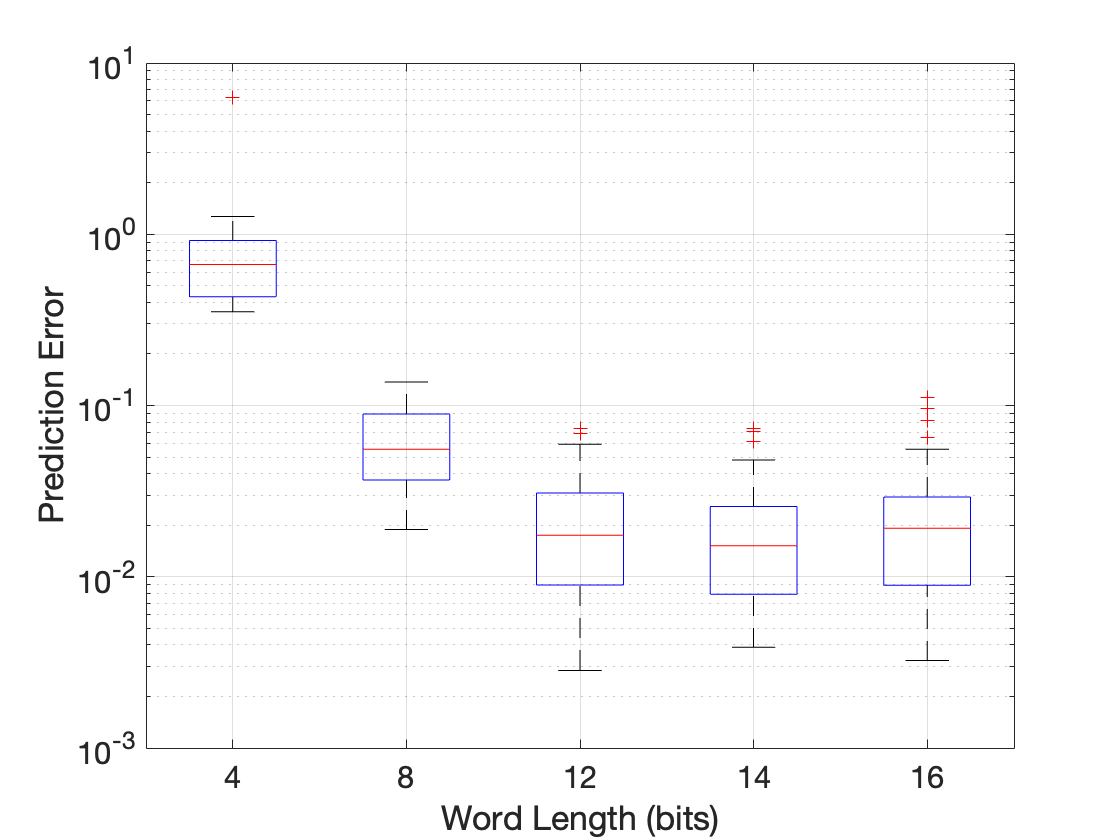

These plots capture the same trend reported in the paper: identification error in both $A$ and $B$ decreases with increasing word-length, and the improvement carries through to both prediction and closed-loop tracking. The clearest transition occurs from 8 to 12 bits, after which additional gains are smaller.

Quantitative results:

- Relative errors in the identified lifted matrices decrease as word-length increases. At 4 bits, both the median error and the spread are substantially larger than in the higher-resolution cases.

- One-step prediction RMSE follows the same trend: 12- to 16-bit predictors keep low error with little variation, while coarse quantization produces visibly larger error and variability across dither realizations.

- Closed-loop tracking shows the strongest transition between 8 and 12 bits. The paper reports the median MPC position RMSE dropping by roughly a factor of 3-4 from 8 to 12 bits, with a much tighter spread across realizations.

- Beyond about 12 bits, gains from additional precision are modest. The reported practical trade-off regime is about 12 to 14 bits, where performance is close to the unquantized baseline at a much lower bandwidth per sample.
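To put the bandwidth savings in numbers, a short calculation compares each tested word-length with a 32-bit float per value. The float32 baseline is an assumption, since the transmitted format of the unquantized data is not stated. Packet headers and framing are ignored. For example, a 12-bit word is 12/32 = 37.5% of a float32 payload, a 62.5% reduction per value.

```python
def payload_fraction(word_length, baseline_bits=32):
    """Fraction of the baseline payload used by one value quantized to word_length bits."""
    return word_length / baseline_bits

# Baseline assumed to be IEEE-754 single precision (32 bits per value); headers ignored.
for bits in [4, 8, 12, 14, 16]:
    frac = payload_fraction(bits)
    print(f"{bits:2d}-bit words: {frac:.1%} of a float32 payload, {1 - frac:.1%} saved")
```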